Only The Dreamer Wakes I

On Dreaming

Sometimes I’ll wake in the middle of the night, reaching urgently for my phone in the dark, with my eyes half-open to type in “a wistful bison sitting in a diner, near a window, drinking a beverage from a straw, in the style of Edward Hopper”.

Those prompts would begin to evolve into something like: “highly detailed concept art lithograph of a woman morphing into a cloud, walking through a colorful futuristic cyberpunk dystopian busy bustling city full of mushrooms, Beijing Forbidden City, cinematic environment, Unreal Engine, dark atmospheric macabre poetcore, beautiful light, mystical vintage sci-fi”

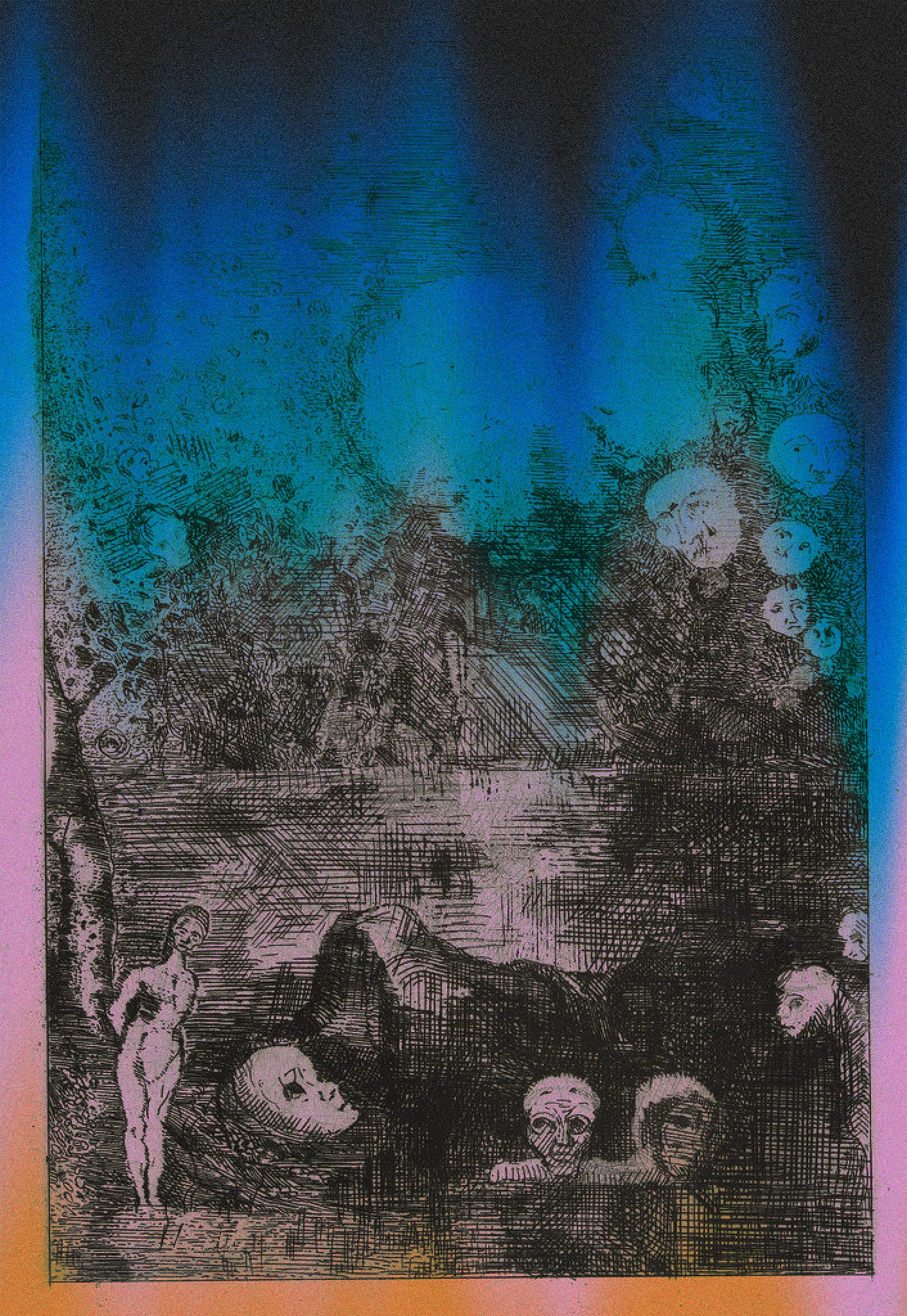

Over a few months of more frequently experimenting with text-to-image AIs, I began to dream differently - farther removed from the familiar, more saturated with absurdity. Or dreams suddenly felt more pressing, and vivid, and like they had to be channeled out into the world, in some form. The aperture of the mind widens, allowing for chaos to flow more freely between the two worlds.

In ancient practices of dream incubation, one lays the groundwork for a fruitful dream primarily by way of: setting an atmosphere or environment (the sacred precinct to sleep within), and choosing a defined intention of what needs to be explored. This is what we’re doing when selecting a prompt, putting together the thought-fragments we need to give something form.

A decade ago, a team of Japanese scientists released a revolutionary scientific paper. Using fMRI imaging, they were able to observe thoughts moving through the minds of zebrafish, a phosphorescent glow dancing through the brain. These patterns eventually provided enough data to visualize what the zebrafish was thinking. Soon, human dreams were being pieced together from fMRI scan data, keywords mapped to ImageNet and WordNet, creating accurate little eerie films - visual representations of the inner workings of a human mind. We’re only a step removed from doing that for ourselves, wandering spatially through concepts we’re always losing.

All dreams are designed to be forgotten, so as to not smudge realities

Our dreams default to evaporating within 10 minutes of waking, into wisps of unrecoverable idea-matter. Upon waking, all the worlds our mind has been wandering through, any presences encountered are promptly forgotten.

The utility here is protecting our sanity, keeping us contained within the rational bounds of reality. Dreams remain a channel for bizarre sensory explosion, a place for our subconscious to make clearer that which we already know, to see connections mired in hallucinations, a realm to access our intuition, an alternate reality or a soft new plane of communication: we spend half our lives in this space. We all dream every single night, whether or not it leaves behind any trace of memory. more on this here

The creation of a neural network feels like building a specific and persistent dreamspace: thickly condensed, frozen and available to explore without constraints of ephemerality. This fresh crop of megalithic generative networks allow us to consult with an amalgam of the entirety of human creative history, or perhaps just a heavy skewing of late ‘90s deviantArt users.

These are structures we could use for immortalization & infinite extrapolation, to create symbolic Horcruxes, or to put aside pieces of memory or seeds of alternate realities – we may leave behind fossils that could grow into entire ecosystems on their own.

Between thought and expression, lies a lifetime.

-The Velvet Underground

Generative machine intelligences can scale up an individual’s creative endeavors, freeing up their creative potential - and exponentially shortening the time from idea to execution.

What’s more interesting is what it might do to our senses and capabilities over time -just as calculators externalized and weakened our capabilities to do mental math, will humans start to miss out on the smaller epiphanies that emerge during the process of creating a piece of art?

What are we thinking vs. what is it thinking? Whose imagination is on display?

In Ted Chiang’s steampunk novella Seventy Two Letters, (taking place in some alt-history-state Britain) automatons are given life by a class of people known as“nomenclators” - who provide specific verbal instructions to confer upon machines a purpose and function. These spoken word key-phrases grow in complexity, based on the actions the unconscious machines needed to embody - surfacing parallels to present-day ‘prompt engineers’.

While pouring a large bronze cast in the shape of an intricate hand, a sculptor in the story argues that automatons with programmed-in dexterity may end up replacing the sculptors themselves. Similar arguments arise today, with artists’ entire bodies of work and imagination reduced to training data - pointillistic samples to be remixed into oblivion. However in this case, machines could quickly rise to the level of collaborator rather than replacement-threat or a mere tool, in the way one uses a rubber duck while programming - an artist and their assembled team of machines together could function as an independent creative house.

The ease of use of generative AI will perhaps just create more artists: When we approach machines as collaborators, we take into account their idiosyncrasies and personality quirks, how each has its own specialization and area of expertise.

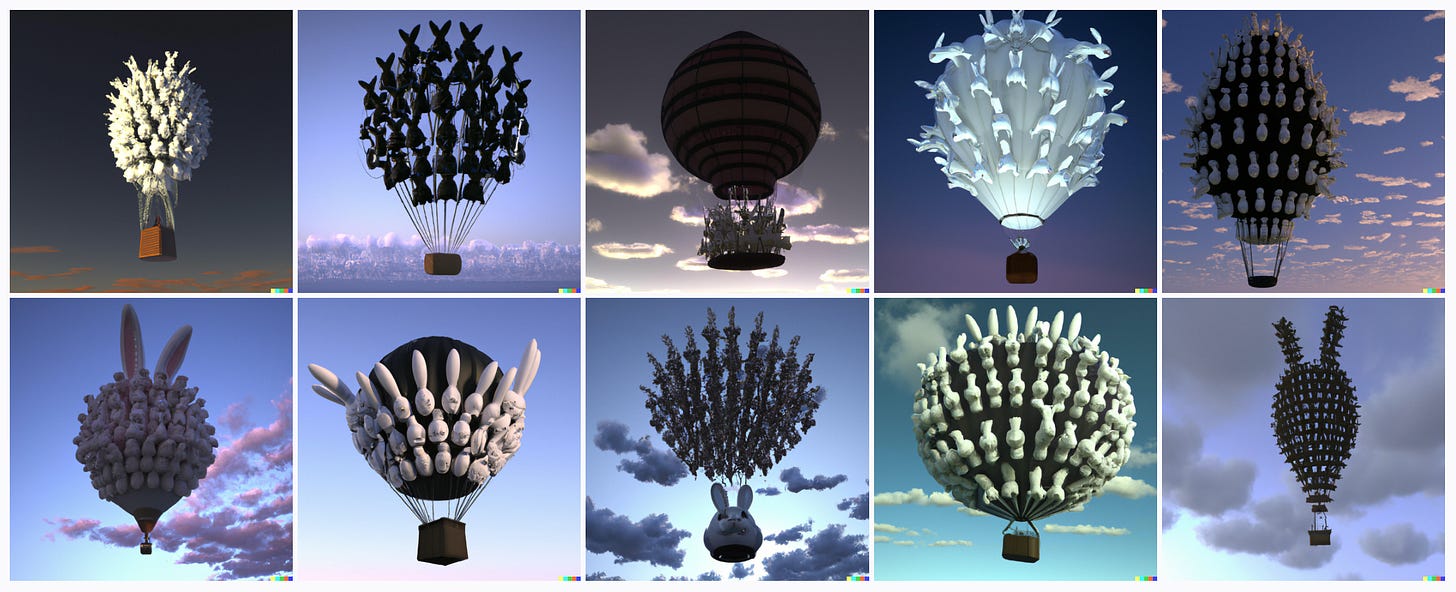

This is how Dall-E and Midjourney see each other: sagely, threatening, expansive:

Everything is about to change: the new age of remixing

“Whenever a skill becomes automated, art moves into new terrains of impossibility. The camera did to painting what guns did to spears. There is no going back.” - David O’Reilly

A lot of alarmist lines of conversation have been triggered in recent weeks, “AI is going to replace artists!” “Art is dead!” and so forth. It appears more as a genre-shift, just as sampling other pieces of music, tiny unrecognizable fragments, paved the way for electronic music.

There’s going to be a shift away from the practice of creating art and visual information as we know it, leading to the emergence of a new art form: of clearer articulation and curation.

A new and pronounced emphasis on refining the skill of holding ideas in your mind with more vividness, and of establishing collaborative dialogue with machines.

While workflows rapidly shift, art is only becoming more accessible and faster, but raising monumental questions of ownership. Most of these networks are trained on infinite corpuses of visual art, some public domain but the majority of scrapings largely without the consent of artists.

Dall-E’s copyright rules have been cloudy during the research preview, you own the rights to any images you generate, to be used in any form other than NFTs. To handle the increases in processing load, it’s going from 10 generated images down to 4, and a system of paid credits. Midjourney’s own moody and artistic style may never compensate the source artists in any way either.

When dealing with a discrete unit like a song being sampled, one could work out a system of royalties due to the original artist. Here we are dealing with the softer realm of influence and inspiration: too subjective to quantify, but enough to make artists justifiably uncomfortable.

The democratization of generative networks will accelerate pre-existing shifts in how we learn: the lack of documentation around these technologies means that we learn primarily by building, and from each other. The instant community that forms over Discord, with incessant bursts of inspiration, moves us from proprietary-everything to an open-book approach of improving upon each other. Building collective knowledge. In a way, we’re recreating the spirit of the ‘90s internet - we’re all hanging out in forums and chatrooms making things together.

A brief history of GANs

When I first started working with GANs (generative adversarial networks) in 2016, I was primarily using them toward extrapolation. One thing that AI is optimized for is uncovering patterns: so surely it could conjure up the next possible concept, right at the outer edge of what already existed.

Training a model was a mercurial process spanning multiple days, leading to the moment of sheer magic when it would eventually spit out 64x64px rough images. These were the first blurry thoughts we could see, and resolution and precision have increased exponentially in recent years.

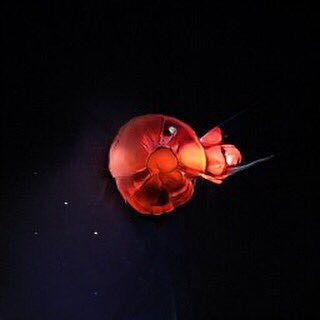

These years were spent searching for glimpses: predictions and odd sketches of something new yet plausible, discovering creatures that lived deep within latent space: what were the interstitial concepts hiding in the spider-like threads between existing ideas and images? From datasets assembled from scientific illustrations of plants, could we see traces of synthetic plants and future mutations? What would an even mix of Alexander McQueen and Oscar de la Renta’s aesthetics look like? What kind of creature might exist in between a jellyfish and a clock?

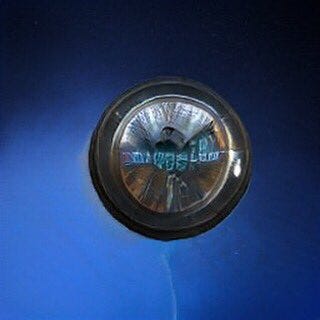

Jellyfish-clock: via Midjourney vs. 2018 Google’s BigGAN

Present-day models are faster and more vividly detailed, and lend themselves better to extrapolation of individual styles and the kinds of impossible collaborations I had always been looking for: blends of sculptures by Rodin and Noguchi, juxtaposing far future events into the past, alt-histories and scenes from far-flung universes. I input a description of the kind of person I tend to fall in love with, and Dall-E generated a number of images of men who looked like they played in Nickelback.

Inpainting and outpainting reveal what might lie beyond the limits of a frame: extending canvases to reveal container worlds. I spent the early days of Dall-E turning movie posters into tapestries, feeling unconstrained by edges, asking neural networks to give form to imaginary creatures that might be standing right behind me just out of frame in a photograph.

A few explorations:

Switching formats (text to image to video) : Some literary paragraphs are each like individual beautiful paintings, and deserve to be repurposed into them. Some people consume information better when it’s visual rather than through written words

Illustrating a book of sci-fi short stories

Stock images: For quick mockups (preferably ones where it doesn’t matter if the humans have demon-faces up close), I’ve been relying on Dall-E to generate all my stock photography

Experimenting with unlikely materials

Creating inspiration / patterns for fashion designs for an upcoming equestrian fashion line

Creating inspiration images for hand-drawn ink illustrations and linoprint carvings

Maintaining a more visual dream diary

Generating textures for 3D models and AR objects

Jewelry design: an opal ring I’m having made

Dreaming while awake

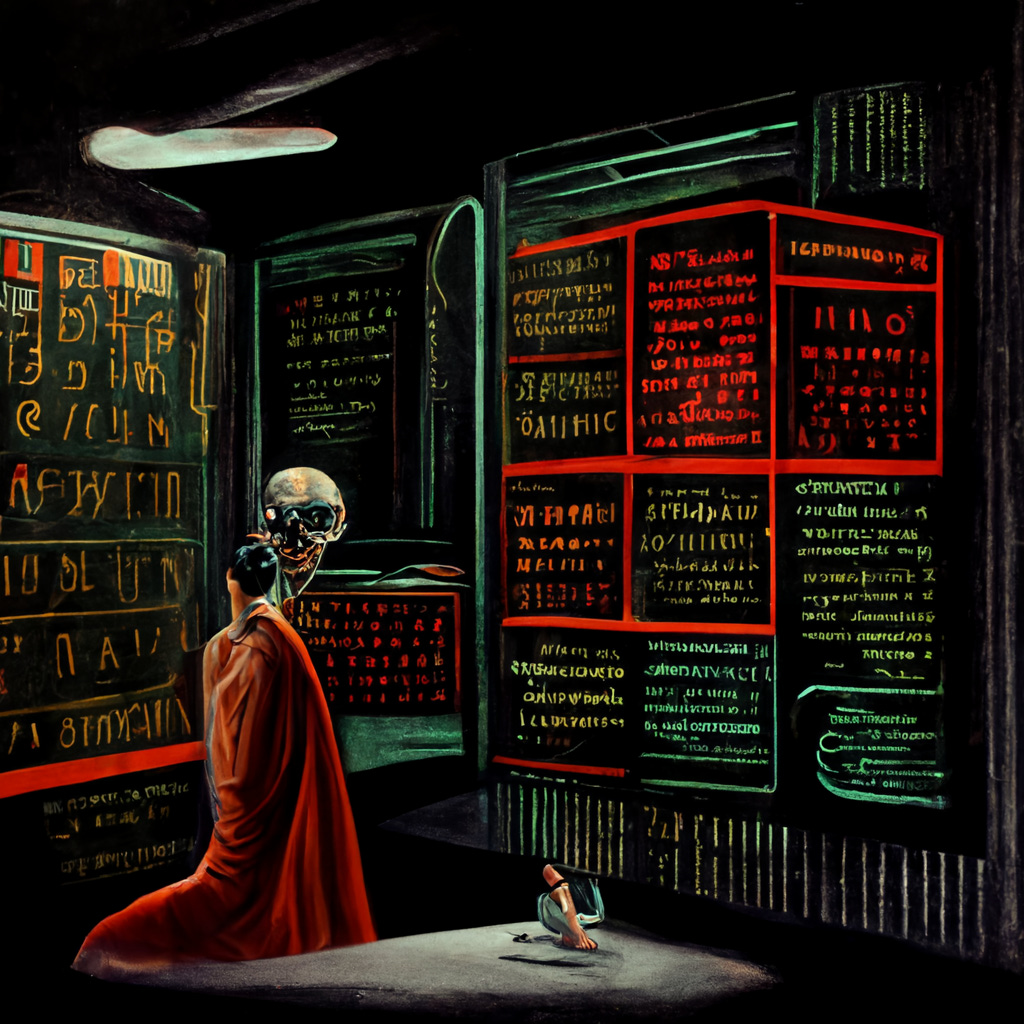

When you see visuals in a lucid dream, you will never be able to see the time - digital clocks tend to behave erratically. The same is true of neural networks: you can only see time as though in a dream, or text at the level of a timid scrawl.

Some of the first things I tried when I got access to these tools: generating a new set of hieroglyphic symbols for modern concepts (eg. In & Out burger), exploring what the color of human souls looked like (similar aesthetic to the Northern Lights). Given that any image may lie behind the right combination of words, there might be a way to even find yourself: What is the prompt that leads to your form - where do you exist in the eyes of a neural network?